With Urs Holzle speaking, how could I miss the Google Cloud Data for Sustainability event in Auckland last week? In this post I'll review the topics presented: Google Earth Engine, renewable energy for Google Cloud's datacenters, and how Google researchers' datasets are providing location-based insights for ESG progress.

To start, the Google office in Auckland is fantastic. It's located on the waterfront, parking is easy and the view is sublime. A commissioned Maori design decorates both the office and the slides, and a karakia opened the well-attended hui.

Urs spoke first. My key takeaway, something I wasn't aware of before, is that Google Cloud has been 100% carbon offset since 2007! The video below breaks down renewable energy throughout the day at various GCP datacenter sites. Some datacenters, such as those in Singapore, have zero percent renewable energy input and so must be completely offset. It is commendable that Google considered the environmental impact of its datacenters so early on. You can read more about that here.

Google's goal is to be 24/7 carbon-free. That means enough renewable energy must be generated or stored to power the datacenters when the wind isn't blowing, the sun isn't shining or, perhaps in NZ's case, the lakes are empty… Google aims to reach this goal by 2030.
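The difference between offsetting over a whole year and being carbon-free every hour is easier to see with numbers. Below is a minimal sketch using made-up hourly figures for a single hypothetical datacenter. The function names and data are my own illustration, not Google's published methodology. It compares volumetric (annual-style) matching with hourly 24/7 matching.

```python
def volumetric_match(load, clean):
    """Total clean energy divided by total load, capped at 100%.
    Surplus in one hour can 'cover' a shortfall in another."""
    return min(sum(clean) / sum(load), 1.0)


def hourly_cfe(load, clean):
    """Hourly (24/7) matching: clean energy only counts up to each
    hour's own load, so a midday surplus can't cover the night."""
    matched = sum(min(l, c) for l, c in zip(load, clean))
    return matched / sum(load)


# Hypothetical day: a flat 10 MWh load every hour, with solar supplying
# 20 MWh/h from 06:00 to 17:59 and nothing overnight.
load = [10.0] * 24
clean = [0.0] * 6 + [20.0] * 12 + [0.0] * 6

print(f"Volumetric match: {volumetric_match(load, clean):.0%}")  # 100%
print(f"Hourly (24/7) CFE: {hourly_cfe(load, clean):.0%}")  # 50%
```

On paper this site is "100% renewable", yet it is carbon-free only half the time. Closing that gap needs storage that shifts the midday surplus into the evening, or clean sources that run overnight. That is the hard part of the 2030 goal.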

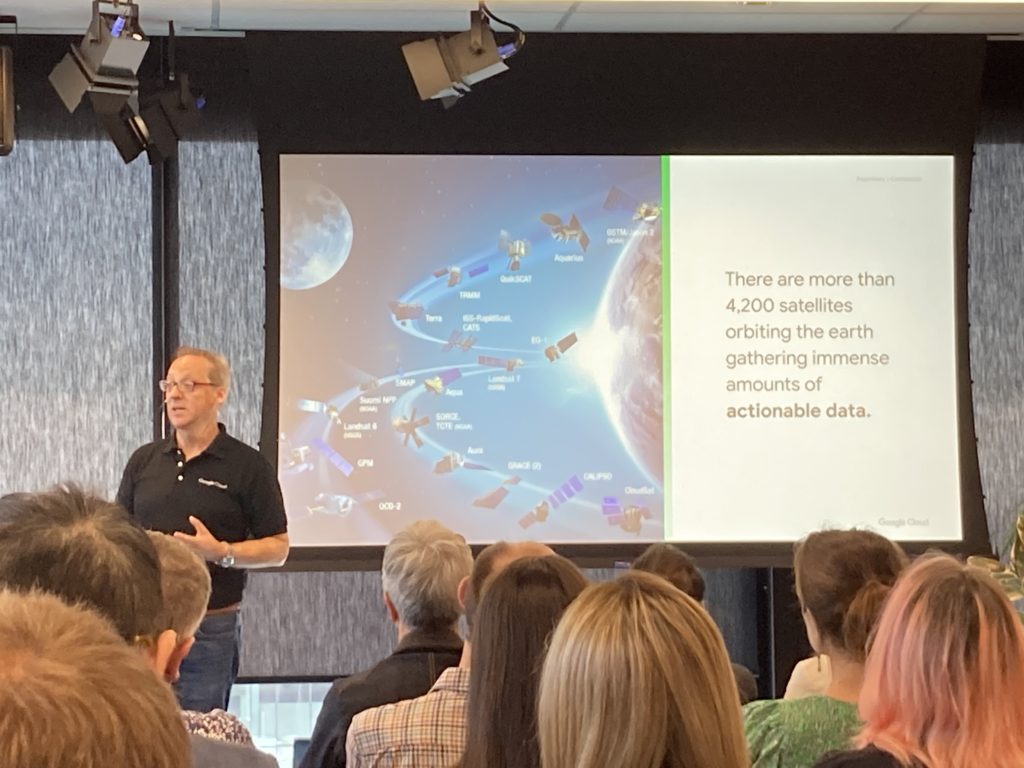

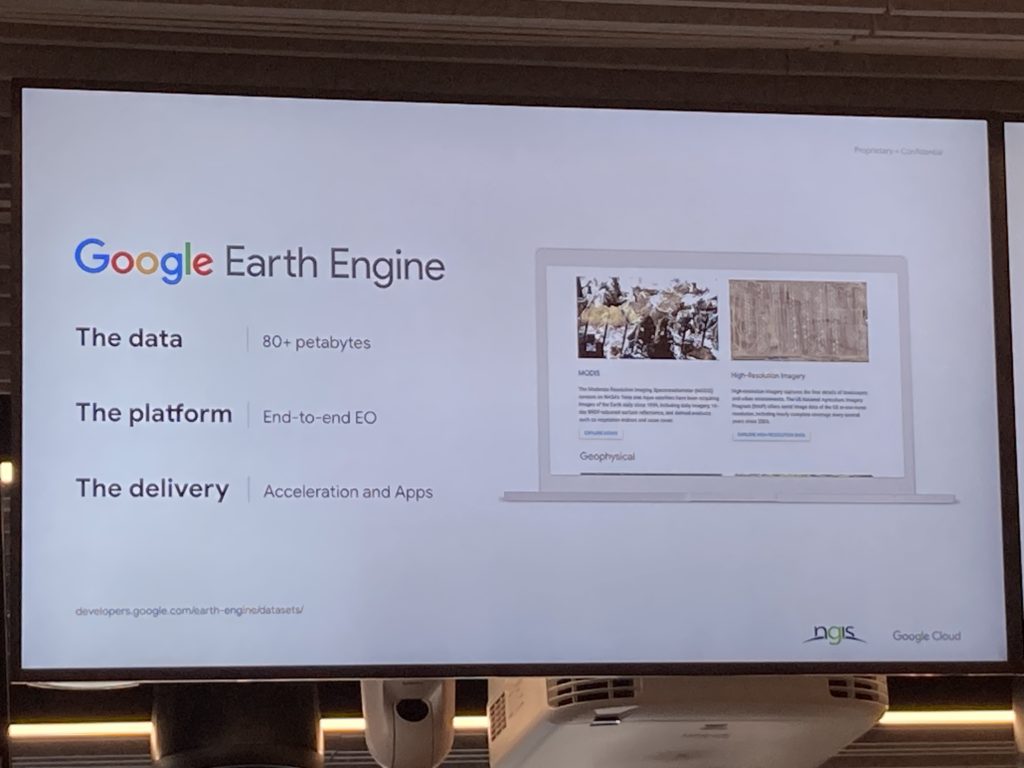

Graeme Merrall spoke next about Google Earth Engine and the environmental geospatial data behind it. Satellites, both public and private, are collecting vast quantities of data, and some of them measure carbon and other greenhouse gases (presumably using spectrometry).

Google Earth Engine claims to have the largest and most complete datasets of geospatial environmental data in the world. It also claims to offer models and predictive capacity developed by researchers at the forefront of environmental analysis and prediction. One example given was a predictive model that ran on GCP in 7 days but would have taken a typical computer over 1200 years to complete.
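For a sense of scale, here is a rough back-of-the-envelope speedup for that example. It assumes a 365.25-day year and takes the quoted figures at face value:

$$
\text{speedup} \approx \frac{1200\ \text{years} \times 365.25\ \text{days/year}}{7\ \text{days}} = \frac{438\,300}{7} \approx 62\,600
$$

On those figures, the GCP run was roughly 60,000 times faster than the "typical computer" it was compared against.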

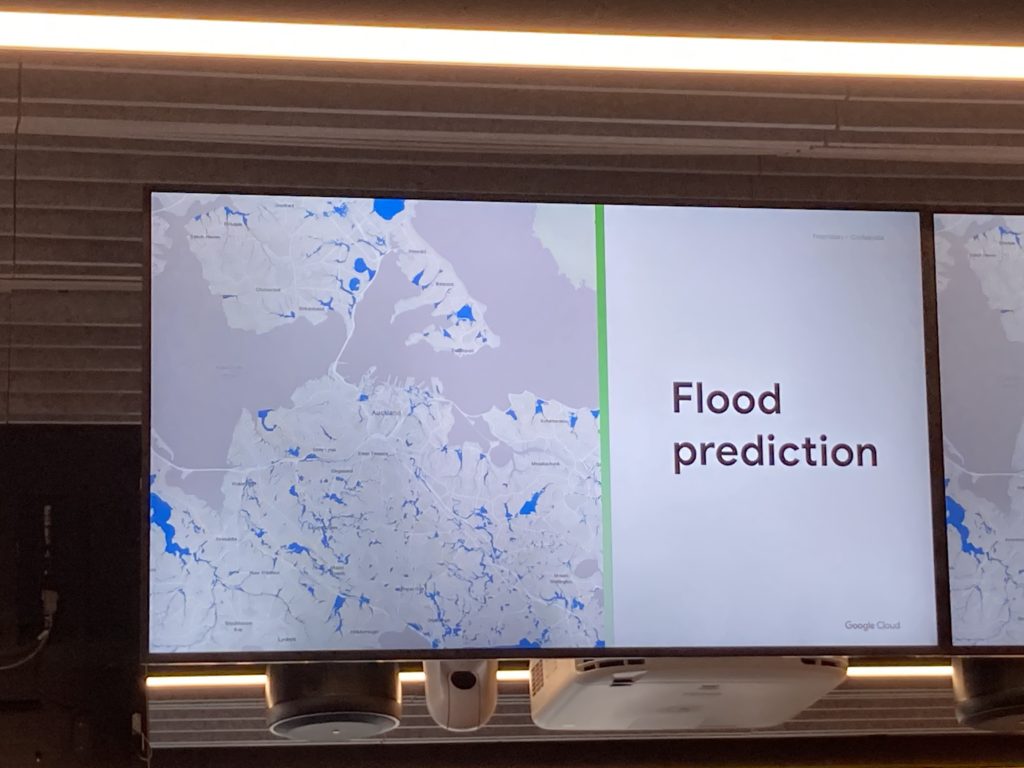

Nathan Eaton followed with specifics of how these datasets are applied to local entities for risk, insurance and the ESG priorities of private firms. Flood protection is topical, with the Auckland Anniversary weekend floods and Cyclone Gabrielle causing significant damage in the area.

Many of the damaged properties carry mortgages, which indirectly exposes banks, while insurers are exposed directly through their coverage of the affected assets. A recent article in Stuff suggests a large proportion of Auckland's properties are susceptible to flood risk, with sea-level rise of between 50 cm and 1 m predicted by the end of the 21st century.

Producers are not immune to the effects of climate change either. The video below shows the shrinking of the Aral Sea in Central Asia, once a fishing ground and now a poster child for ecological collapse.